Jonny Naylor, VP Content

In The Wharton Current’s most recent podcast, Co-President Lilly Chadwick sat down with Aroon Vijaykar, SVP of Strategy and Commercial at EmeraldAI, an exciting early-stage startup “focused on delivering larger, faster and cheaper interconnections for data centers.” But why do these energy-related challenges exist in the first place? To help listeners get the most out of the episode, the short primer below covers AI’s energy needs and the difficulties utilities currently face in meeting them.

Artificial intelligence is creating large and geographically concentrated loads that stress parts of the power system long before the nation runs out of energy overall. Federal analyses estimate that U.S. data centers consumed about 4.4 percent of national electricity in 2023, with scenarios that reach 6.7 to 12 percent by 2028. At the same time, the Energy Information Administration projects record U.S. electricity consumption in 2025 and 2026, ending a decade of flat demand. These trends explain why utilities view AI as both an opportunity and a reliability challenge.

Why AI is difficult for utilities

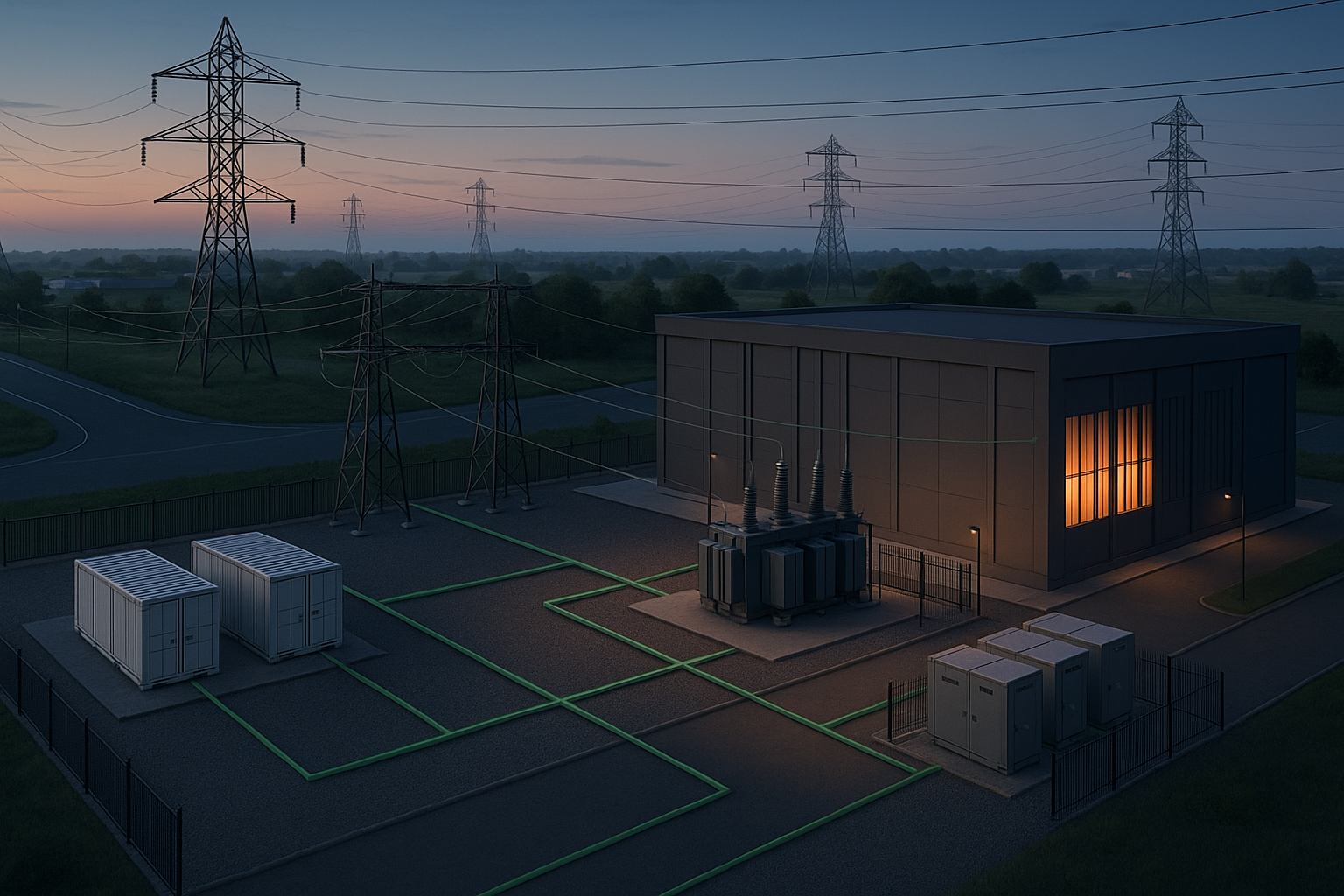

The first difficulty is temporal. Planning, permitting, and constructing grid infrastructure typically requires several years. By contrast, AI campuses often seek tens to hundreds of megawatts at a single interconnection point on a one- to two-year timeline. Even when a region has adequate generation in aggregate, local transmission and distribution limits can bind at the specific substation where a project wishes to connect.

The second difficulty is spatial. AI demand clusters near fiber backbones, established cloud campuses, and favorable land or tax regimes. These clusters can saturate transformer ratings, feeder thermal limits, or voltage performance margins. System adequacy does not guarantee nodal feasibility.

The third difficulty is operational. Traditional enterprise IT loads have generally been fairly constant. AI mixes real-time inference with schedulable training and batch analytics. Without explicit coordination, the composite load looks like a persistent industrial customer that sometimes ramps with human activity. This behavior can coincide with regional or local peaks and with the contingency-risk hours that concern operators.

Why the grid looks underutilized

A modern power system is built for the worst credible hour and the next failure, not for the daily average. Apparent underutilization is therefore intentional. Three planning concepts explain this.

Peak versus average: The capacity requirement is set by a small fraction of hours near the coincident system peak. Building only to average load would cause involuntary curtailment during extreme weather or synchronized behavior.

Reserve margin and operating reserves: Planners maintain dependable capacity above forecast peak to cover uncertainty, maintenance, and contingencies. Operating reserves, including spinning reserve, provide fast response to imbalance events. North American standards require the system to meet performance criteria following a range of contingencies, including loss of a single element and, in some studies, sequential events. This security philosophy is codified in NERC’s Transmission System Planning Performance Requirements.

Transmission and distribution headroom: Lines, transformers, and substations have continuous and emergency thermal ratings and must also satisfy protection and voltage stability constraints. Operating well below ratings on mild days preserves headroom to withstand a credible contingency without cascading failures. This visible headroom is not waste; it is the buffer that enables security during the rare but critical hours that determine reliability outcomes, such as a major failure or other extreme event.

The implication is that a region may have ample total generation and still be unable to accommodate a new 100 megawatt interconnection at a specific node without upgrades, because reliability criteria and thermal limits govern what can be delivered where and when.
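To make that implication concrete, here is a minimal sketch of a single-contingency headroom check at one substation. The transformer ratings, the existing peak, and both function names are hypothetical; real interconnection studies rely on power flow, voltage, and protection analysis rather than simple arithmetic like this.

```python
def n_minus_1_headroom_mw(transformer_ratings_mw, existing_peak_mw):
    """Headroom left at a substation after losing its largest transformer.

    Simplifying assumption: firm capacity is the sum of the remaining
    transformer ratings. Real studies also check voltage and protection.
    """
    if not transformer_ratings_mw:
        raise ValueError("need at least one transformer rating")
    firm_capacity_mw = sum(transformer_ratings_mw) - max(transformer_ratings_mw)
    return firm_capacity_mw - existing_peak_mw


def can_interconnect(transformer_ratings_mw, existing_peak_mw, new_load_mw):
    """True if the new load fits inside the single-contingency headroom."""
    headroom = n_minus_1_headroom_mw(transformer_ratings_mw, existing_peak_mw)
    return headroom >= new_load_mw


# Hypothetical substation: three 150 MW transformers, 230 MW existing peak.
ratings = [150.0, 150.0, 150.0]
existing_peak = 230.0

print(n_minus_1_headroom_mw(ratings, existing_peak))    # 70.0
print(can_interconnect(ratings, existing_peak, 100.0))  # False
```

In this illustrative case the substation runs at roughly half of its installed 450 MW even at peak, yet a new 100 MW load does not fit, because only 70 MW remains once the loss of one transformer is assumed.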

Definitions key to understanding the problem

Peak load or peak demand: The highest load during a specified period that sets capacity needs.

Capacity: The maximum instantaneous power deliverable by a generator, line, transformer, or substation, measured in megawatts.

Capacity factor: The ratio of actual energy produced over a period to the energy that could have been produced at continuous full power.

Reserve margin: Dependable capacity in excess of forecast peak maintained to cover uncertainty and contingencies.

Operating reserves: Fast responding capacity used to correct short term deviations.

Spinning reserves: Online, synchronized generating capacity that is intentionally kept unloaded so it can increase output almost immediately after a disturbance.

Rate base: The value of utility assets used to provide service, net of accumulated depreciation and adjusted for items such as working capital and deferred taxes. Commissions allow the utility to earn an authorized return on this value as part of revenue requirements.

Latency: The elapsed time between a request and a response in computing. Latency tolerance determines how far a workload can be shifted in time or location without violating service obligations.

Demand response: Changes in load in response to price or reliability signals that reduce or shift consumption during constrained hours.

Compute: The processing capacity used to train or serve AI models. Total work is quantified in floating-point operations (FLOPs) and processing rate in FLOP/s; in practice, compute is often budgeted in accelerator-hours (e.g., GPU-hours) and throughput is measured in tokens per second.
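Two of the definitions above reduce to simple ratios. The sketch below works through them with made-up numbers. Expressing reserve margin as a fraction of forecast peak is a common convention that we assume here; the plant and system figures are purely illustrative.

```python
def capacity_factor(energy_mwh, capacity_mw, hours):
    """Actual energy divided by the energy at continuous full power."""
    return energy_mwh / (capacity_mw * hours)


def reserve_margin(dependable_capacity_mw, forecast_peak_mw):
    """Dependable capacity above forecast peak, as a fraction of that peak.

    Assumption: the margin is normalized by forecast peak.
    """
    return (dependable_capacity_mw - forecast_peak_mw) / forecast_peak_mw


# Hypothetical 100 MW plant that produced 438,000 MWh over an 8,760-hour year.
print(capacity_factor(438_000.0, 100.0, 8_760))  # 0.5

# Hypothetical system: 11,500 MW dependable capacity, 10,000 MW forecast peak.
print(reserve_margin(11_500.0, 10_000.0))        # 0.15
```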

What AI data centers do and why that matters for power

AI workloads fall into categories with distinct power and flexibility characteristics.

Training: Foundation model training and large scale fine tuning run for hours to weeks on tightly coupled accelerator clusters. Throughput and job completion drive value more than millisecond response. With appropriate checkpointing and orchestration, these jobs can be slowed, paused, or shifted across hours and locations. From a grid perspective, training resembles a controllable industrial process with high energy intensity and meaningful temporal flexibility.

Inference: Serving model outputs to end users or downstream applications is latency constrained. Autoscaling, batching, and caching can smooth demand, but there is limited tolerance for shifting across hours. Inference tends to track human activity and can align with evening peaks on distribution networks.

Offline inference and analytics: Periodic scoring and analytics occupy a middle ground. These tasks are throughput oriented and often schedulable within daily or weekly windows, which allows alignment with off-peak periods or hours of high renewable output.

A site that distinguishes and manages these classes can offer verifiable demand flexibility during the small number of hours that matter most for reliability, while maintaining service level commitments.
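As a rough illustration of what distinguishing these classes might look like, the sketch below tags each workload with a class and estimates how much of a site's load could be curtailed in a constrained hour. The flexible shares, workload names, and megawatt figures are all assumptions chosen for the example, not measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    kind: str        # "training", "inference", or "offline"
    power_mw: float


# Hypothetical share of each class that can be paused or shifted
# during a constrained hour without breaking service commitments.
FLEXIBLE_SHARE = {"training": 0.5, "inference": 0.0, "offline": 1.0}


def curtailable_mw(workloads):
    """Load the site could shed in a constrained hour, under the shares above."""
    return sum(w.power_mw * FLEXIBLE_SHARE[w.kind] for w in workloads)


site = [
    Workload("pretraining-run", "training", 60.0),
    Workload("chat-serving", "inference", 30.0),
    Workload("nightly-scoring", "offline", 10.0),
]

flexible = curtailable_mw(site)
firm = sum(w.power_mw for w in site) - flexible
print(flexible, firm)  # 40.0 60.0
```

Under these assumptions, a 100 MW site would present only 60 MW of firm load during the hours that matter most, which is the quantity a utility would care about when studying peak impact.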

Flexibility as an interconnection tool

Regional transmission organizations and utilities focus on the relatively few hours each year when constraints bind. If a large load can demonstrate reliable curtailment or shifting during those hours, the expected impact on peak and contingency headroom falls substantially. That can unlock larger and faster interconnections and reduce the need to overbuild network capacity. The public interest rationale is clear. Earlier access to power supports innovation and economic growth. Verified flexibility reduces rate base growth driven by worst case assumptions, which protects ratepayers from unnecessary cost escalation. EIA’s forward demand outlook underscores why these tools matter now, as historic load growth returns.
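The effect of curtailing during only the highest-load hours can be sketched with a toy hourly profile. Everything below is hypothetical: six hours stand in for a full year, the site draws a flat 100 MW, and it is assumed to drop to 40 MW of firm load in the two highest system-load hours.

```python
def coincident_peak_mw(system_load_mw, site_load_mw):
    """Highest combined load across all hours."""
    return max(s + d for s, d in zip(system_load_mw, site_load_mw))


def curtail_in_top_hours(system_load_mw, site_load_mw, n_hours, firm_mw):
    """Cap the site at firm_mw during the n_hours with the highest system load."""
    top_hours = sorted(
        range(len(system_load_mw)),
        key=lambda h: system_load_mw[h],
        reverse=True,
    )[:n_hours]
    curtailed = list(site_load_mw)
    for h in top_hours:
        curtailed[h] = min(curtailed[h], firm_mw)
    return curtailed


system = [700.0, 800.0, 950.0, 1000.0, 900.0, 750.0]  # existing load by hour
site = [100.0] * len(system)                          # inflexible data center

print(coincident_peak_mw(system, site))               # 1100.0

flexible_site = curtail_in_top_hours(system, site, n_hours=2, firm_mw=40.0)
print(coincident_peak_mw(system, flexible_site))      # 1040.0
```

In this toy case the site adds 100 MW to the peak when it is inflexible and 40 MW when it curtails in just two hours, while consuming full power the rest of the time.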

Flexibility must be credible. Practical implementations connect data center workload managers to grid signals that include real time and day ahead prices, congestion risk, curtailment notices, and reliability notifications. Controls translate those signals into site specific actions such as dynamic admission control for training clusters, batch scheduling windows, geographic workload placement, and limited use of onsite energy storage to shave peaks. Measurement and verification allow utilities to count these actions in interconnection studies and, where applicable, to compensate performance through market programs for demand response and operating reserves.
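One way to picture the translation from grid signals to site actions is a small rule-based controller. The sketch below is only an illustration: the signal fields, the action names, and the 200 USD/MWh price threshold are hypothetical and do not describe any particular utility program or vendor product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GridSignal:
    price_usd_per_mwh: float  # real-time or day-ahead price
    curtailment_notice: bool  # utility has asked the site to reduce load
    reliability_alert: bool   # operator has flagged a contingency-risk hour


def choose_actions(signal, high_price=200.0):
    """Map a grid signal to a list of site actions (hypothetical rules)."""
    actions = []
    if signal.curtailment_notice or signal.reliability_alert:
        # Reliability events take priority: stop admitting new training
        # jobs and use onsite storage to shave the site's draw.
        actions += ["pause_training_admission", "discharge_storage"]
    elif signal.price_usd_per_mwh >= high_price:
        # Price-only events: push schedulable batch work to later windows.
        actions += ["defer_batch_jobs"]
    return actions


print(choose_actions(GridSignal(50.0, False, False)))   # []
print(choose_actions(GridSignal(250.0, False, False)))  # ['defer_batch_jobs']
print(choose_actions(GridSignal(50.0, True, False)))
# ['pause_training_admission', 'discharge_storage']
```

A production system would also need to log which actions were taken and the resulting metered load, since that record is what makes the flexibility verifiable.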

Cost allocation and regulatory context

When a new customer triggers upgrades, regulators must determine how costs are recovered. Some investments are placed in the rate base and recovered broadly. Others are directly assigned to the interconnecting customer through upfront contributions or special contracts. The core principle is cost causation with prudence review. A clear definition of rate base and its components helps stakeholders understand how flexibility that reduces peak contribution can defer or resize network investments and thereby alter revenue requirements.

Conclusion

The perception of an underutilized grid arises from its design objective. Power systems are engineered to satisfy the worst hour and to ride through credible failures. AI demand stresses that design at specific nodes and times, not necessarily at the system average. The most effective near term response is to align compute scheduling and siting with power system physics, distinguish workloads by latency and flexibility, coordinate with grid signals, verify performance, and apply cost allocation that reflects actual peak impact. Done well, this approach accelerates interconnections, protects reliability, and limits unnecessary additions to rate base even as total electricity consumption reaches new highs.